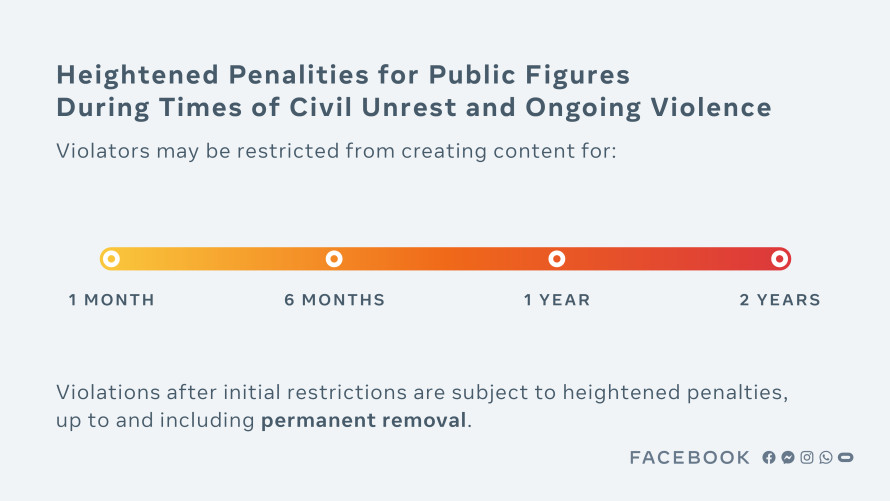

Donald Trump’s Facebook ban will last at least two years, the company announced on Friday. Facebook said that the former president’s actions on January 6, which contributed to a violent mob storming Capitol Hill and staging an insurrection that led to five deaths, “constituted a severe violation of our rules,” and that it was enacting this policy change as part of a new approach to public figures during civil unrest.

Facebook added that the two-year sanction constitutes a time period “long enough” to be a significant deterrent to Trump and other world leaders who might make similar posts, as well as enough to allow for a “safe period of time after the acts of incitement.” However, Facebook still has not made a final decision about the future of Trump’s account. The company said that after two years, it will again evaluate whether there’s still a risk to public safety and potential civil unrest.

“We know that any penalty we apply — or choose not to apply — will be controversial. There are many people who believe it was not appropriate for a private company like Facebook to suspend an outgoing President from its platform, and many others who believe Mr. Trump should have immediately been banned for life,” Nick Clegg, the company’s vice president of global affairs, said in a blog post, later adding: “The Oversight Board is not a replacement for regulation, and we continue to call for thoughtful regulation in this space.”

The announcement comes after Facebook’s oversight board, a group of policy experts and journalists the company has appointed to handle difficult content moderation questions, decided to uphold the platform’s freeze on the former president’s account. In May, the board ruled that Facebook should not have banned Trump indefinitely and would have to make a final decision within six months. The board also said that Facebook would have to clarify its rules about world leaders and the risk of violence, among other recommendations.

“The Oversight Board is reviewing Facebook’s response to the Board’s decision in the case involving former US President Donald Trump and will offer further comment once this review is complete,” the board’s press team said in response to Facebook’s Friday announcement. Later in the day, the board said in a statement it was “encouraged” by Facebook’s decision, and will monitor the company’s implementation.

Facebook now says it will fully implement 15 of the oversight board’s 19 recommendations. It also responded to the board’s demand that it provide more detail on its newsworthiness exception, a policy that Facebook has used — though rarely — to give politicians a free pass to post content that violates its rules. Now, Facebook says it will label posts that receive those exceptions, and will treat politicians’ posts more like those from regular users.

This set of decisions from Facebook has major implications not just for Trump’s account but also for national politics in the United States for the foreseeable future. At the same time, the decisions signal that the company has remained steadfast in maintaining its power to decide what politicians can ultimately post to the platform. Facebook is providing more details about the rules it could use to punish politicians who violate its community guidelines, potentially increasing transparency. Still, it’s Facebook that has the final say over enforcement, including what’s considered newsworthy and remains on the platform versus what violates its community guidelines and gets removed.

Facebook still decides who gets a free pass from its rules

In Friday’s announcement, Facebook said it would change one of its most controversial policies: an allowance for content that breaks its rules but is important enough to the public discourse to remain online, often because it has been posted by a politician. Some call this the “newsworthiness exception” or the “world leader exception.” Now, Facebook is changing the rules so that the exemption seems more transparent and less unfair. But the company is still preserving its power to decide what happens the next time a politician posts something offensive or dangerous.

Trump was the inspiration for this exemption, which Facebook first created in 2015 after the former president (then a candidate) posted a video of himself saying Muslims should be banned from the United States. The newsworthiness exception was formally announced in 2016 and has long been controversial because it creates two types of users: those who have to follow Facebook’s rules and those who don’t, and who can therefore post offensive and even dangerous content.

In 2019, the company added more detail. Nick Clegg, Facebook’s vice president for global affairs and communications, said that Facebook would presume anything a politician posted to its platform was of interest to the public and should stay up, “even when it would otherwise breach our normal content rules,” as long as the public interest outweighed the risk of harm.

The policy also presumably serves as a convenient shield for Facebook to avoid getting into fights with powerful people (like the president of the United States).

For all the controversy and confusion it has produced, Facebook says the newsworthiness exception is rarely deployed. In 2020, Facebook’s independent civil rights audit reported that Facebook had used the exception only 15 times in the previous year, and only once in the US. Facebook amended its previous statement to the oversight board on Friday, saying it has technically used the standard only once in regard to Trump, over a video Trump posted of one of his 2019 rallies. Although Trump was rarely the beneficiary of the policy, the oversight board said back in May that his account suspension meant Facebook should respond to the ongoing confusion.

Now, Facebook says politicians’ content will be analyzed for violations of its community guidelines, and weighed against the public interest, just like any other user’s. While that means the formalized global leader exception is gone, the decision about what stays up on Facebook and what comes down remains where it started: in Facebook’s hands.

Facebook won’t study how the platform contributed to January 6

In the aftermath of the deadly January 6 insurrection, many have pointed to the role social media platforms, including Facebook, played in exacerbating the violence. Critics of Facebook have said the insurrection showed that Facebook should reflect not just on its approach to Trump’s account, but also on the algorithms, ranking systems, and design choices that could have helped the rioters organize.

Even the Facebook oversight board, an independent body set up by Facebook to serve as a sort of court for the company’s most difficult content moderation decisions, recommended that Facebook take such a step. Earlier this week, allies of the Biden administration urged the company to follow that guidance and conduct a public-facing review of how the platform might have contributed to the insurrection.

Facebook has ample reason to believe their platform contributed to the events of Jan. 6. At a minimum they have an obligation to conduct a full, independent, thorough investigation, and to publish the results. It’s the least they should do.

— Katy Glenn Bass (@KGlennBass) June 4, 2021

But Facebook isn’t doing that, and it seems to be deflecting that responsibility. The company is instead pointing to a separate research effort focused on Facebook, Instagram, and the 2020 US election, which Facebook says could include studying what happened at the Capitol.

“The responsibility for January 6, 2021, lies with the insurrectionists and those who encouraged them,” the company said in its Friday decision, adding that independent researchers and politicians were best suited to researching the role of social media in the insurrection.

“We also believe that an objective review of these events, including contributing societal and political factors, should be led by elected officials,” wrote the company, adding that it would still work with law enforcement. Republicans, notably, have all but shut down the possibility of a bipartisan January 6 commission.

Facebook might never make a final ruling on Trump

Facebook is delaying, perhaps forever, a final decision on Trump himself. Right now, Facebook plans to suspend Trump for a minimum of two years, meaning he could regain his account at the beginning of 2023 at the earliest. The ban does prevent Trump from using the platform to comment on the 2022 midterm elections, during which his posts could have boosted (or hurt) the hundreds of Republican candidates for the House.

Still, the two-year ban is not a final ruling as to whether Trump can return to Facebook. That means it’s still unclear if the former president will have access to the platform should he run for president again. It also leaves open the question of what it would really take for a politician to be permanently booted from the platform.

Many are frustrated that Facebook didn’t permanently ban Trump. It’s possible he could return to the platform in time to run for president in 2024, and Facebook obviously knows that. “If this gets 2 years, what can one possibly do to get a lifetime ban,” wrote one employee on an internal post, according to BuzzFeed. Civil rights groups reacting to the decision called Facebook’s ruling inadequate, and called Trump’s potential return to the social network a danger to democracy. Some think the decision yet again proves lawmakers need to step in and regulate social media.

Trump, for his part, seems extremely displeased with Facebook’s decision. “Facebook’s ruling is an insult to the record-setting 75M people, plus many others, who voted for us in the 2020 Rigged Presidential Election,” Trump said in a statement released Friday. “They shouldn’t be allowed to get away with this censoring and silencing and ultimately we will win. Our Country can’t take this abuse any more!”

It’s not clear what Trump returning to Facebook would even look like. Facebook has said the policy is in part meant to deter politicians from violating its rules again, but Facebook’s current suspension hasn’t stopped the former president from spreading election conspiracy theories on other platforms. Facebook implied Trump could possibly return when things are more stable, but it often appears that Trump himself is a primary source of instability.

It matters that Trump won’t be posting on Facebook until 2023, at the earliest, and that the company has some shiny new rules. But overall, Facebook is once again holding onto its power to decide what happens next.

Update, June 4, 6:10 pm ET: This piece has been updated with further analysis.
