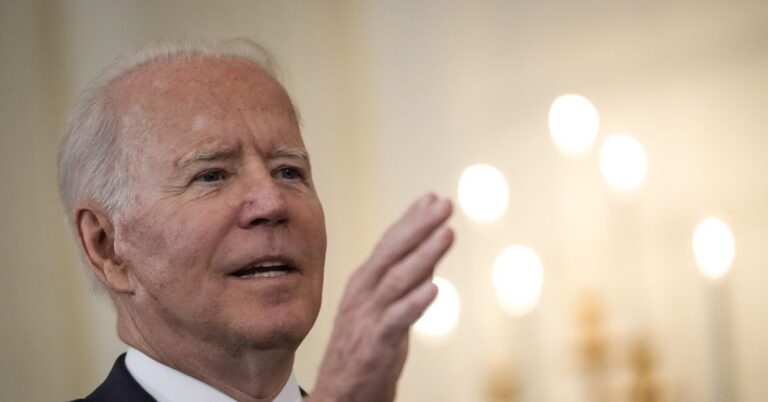

Yet this assumes that you can get hold of that training data, says Kautz. He and his colleagues at Nvidia have come up with a different way to expose private data, including images of faces and other objects, medical data, and more, that does not require access to training data at all.

Instead, they developed an algorithm that can re-create the data that a trained model has been exposed to by reversing the steps that the model goes through when processing that data. Take a trained image-recognition network: to identify what’s in an image, the network passes it through a series of layers of artificial neurons. Each layer extracts different levels of information, from edges to shapes to more recognizable features.

Kautz’s team found that they could interrupt a model in the middle of these steps and reverse its direction, re-creating the input image from the internal data of the model. They tested the technique on a variety of common image-recognition models and GANs. In one test, they showed that they could accurately re-create images from ImageNet, one of the best-known image-recognition data sets.
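The following is a minimal sketch that makes the idea of running a model "in reverse" concrete. It uses generic optimization-based feature inversion and is not Nvidia's actual method. Given the intermediate features a model produced, it searches for an input that reproduces them. The "model" is a toy assumption: a made-up 3x3 weight matrix `W` followed by a tanh activation. All names and values are hypothetical. Real image models are far deeper and lose information along the way.

```python
import math

# Hypothetical stand-in for the early layers of a model: one fully connected
# layer followed by tanh. The weights are made up for illustration.
W = [
    [1.0, 0.3, -0.2],
    [0.1, 0.8, 0.2],
    [-0.3, 0.1, 0.9],
]


def forward(x):
    """Compute the intermediate features h = tanh(W x)."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in W]


def invert(h, steps=2000, lr=0.2):
    """Recover an input whose features match h, by gradient descent.

    Minimizes L(x) = sum_i (tanh(W x)_i - h_i)^2, starting from x = 0.
    """
    x = [0.0] * len(W[0])
    for _ in range(steps):
        z = [sum(w * xi for w, xi in zip(row, x)) for row in W]
        a = [math.tanh(zi) for zi in z]
        # dL/dz_i = 2 (a_i - h_i) * tanh'(z_i), where tanh'(z) = 1 - tanh(z)^2
        dz = [2.0 * (ai - hi) * (1.0 - ai * ai) for ai, hi in zip(a, h)]
        # dL/dx_j = sum_i W[i][j] * dz_i, i.e. W^T dz
        grad = [sum(W[i][j] * dz[i] for i in range(len(W))) for j in range(len(x))]
        x = [xj - lr * gj for xj, gj in zip(x, grad)]
    return x


secret_input = [0.4, -0.3, 0.2]   # the "private" data
features = forward(secret_input)   # the model's internal data
recovered = invert(features)       # attacker only sees `features`
print([round(v, 4) for v in recovered])
```

Gradient descent recovers the input here only because this toy layer is invertible and well conditioned. Deeper layers discard information, so practical inversion attacks typically add assumptions about what natural images look like. Reconstructions also tend to get less faithful the deeper into the network the features are taken.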


As in Webster’s work, the re-created images closely resemble the real ones. “We were surprised by the final quality,” says Kautz.

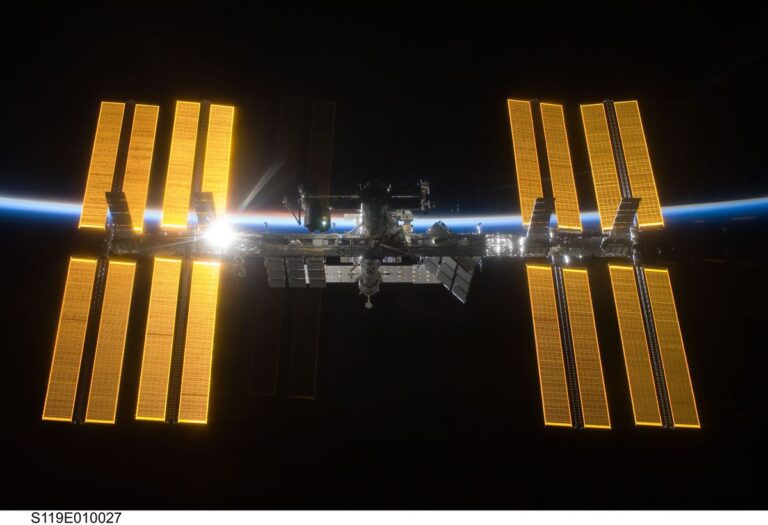

The researchers argue that this kind of attack is not simply hypothetical. Smartphones and other small devices are starting to use more AI. Because of battery and memory constraints, a model is sometimes run only partway on the device itself, and its intermediate results are sent to the cloud for the final computing crunch. This approach is known as split computing. Most researchers assume that split computing won’t reveal any private data from a person’s phone because only the model’s internal data is shared, not the raw input, says Kautz. But his attack shows that this isn’t the case.
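Here is a minimal sketch of split computing. It assumes a hypothetical three-layer network with made-up weights and an arbitrary split after the second layer. The names `LAYERS`, `SPLIT`, `device_side`, and `cloud_side` are illustrative only and do not belong to any real framework's API. The sketch shows that what leaves the phone is not the raw input but the intermediate activations. Those activations are still a deterministic function of the input, and they are exactly the data an inversion attack works from.

```python
import json


def relu_layer(weights, bias, x):
    """One fully connected layer followed by a ReLU activation."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, bias)]


# Hypothetical three-layer model; all weights are made up for illustration.
LAYERS = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]),  # layer 1 (phone)
    ([[1.0, -1.0], [0.4, 0.6]], [0.05, 0.0]),            # layer 2 (phone)
    ([[0.7, 0.2], [-0.3, 0.9]], [0.0, 0.0]),             # layer 3 (cloud)
]
SPLIT = 2  # layers before index SPLIT run on the phone, the rest in the cloud


def run(layers, x):
    """Pass x through the given layers in order."""
    for weights, bias in layers:
        x = relu_layer(weights, bias, x)
    return x


def device_side(x):
    """Run the on-device layers and serialize the intermediate features."""
    features = run(LAYERS[:SPLIT], x)
    return json.dumps(features)  # this payload is what leaves the phone


def cloud_side(payload):
    """Finish the computation from the intermediate features."""
    return run(LAYERS[SPLIT:], json.loads(payload))


private_input = [0.9, 0.1, 0.4]
payload = device_side(private_input)
print(payload)  # intermediate activations, not the raw input
split_output = cloud_side(payload)
full_output = run(LAYERS, private_input)
```

JSON is used only to make the transmitted payload explicit. Python's float representation round-trips exactly, so the split result matches running the whole model in one place.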

Kautz and his colleagues are now working to come up with ways to prevent models from leaking private data. “We wanted to understand the risks so we can minimize vulnerabilities,” he says.

Even though they use very different techniques, he thinks that his work and Webster’s complement each other well. Webster’s team showed that private data could be found in the output of a model; Kautz’s team showed that private data could be revealed by going in reverse, re-creating the input. “Exploring both directions is important to come up with a better understanding of how to prevent attacks,” says Kautz.