Yet while the speed and intention of this effort to protect workers, in the absence of an effective national-level US response, were admirable, these Chinese companies are also implicated in egregious human rights abuses.

Dahua is one of the major providers of the “smart camp” systems that Vera Zhou experienced in Xinjiang (the company says its facilities are supported by technologies such as “computer vision systems, big data analytics and cloud computing”). In October 2019, both Dahua and Megvii were among eight Chinese technology firms placed on a list that blocks US citizens from selling goods and services to them (the list, which is intended to prevent US firms from supplying non-US firms deemed a threat to national interests, prevents Amazon from selling to Dahua, but not from buying from it). BGI’s subsidiaries in Xinjiang were placed on the US no-trade list in July 2020.

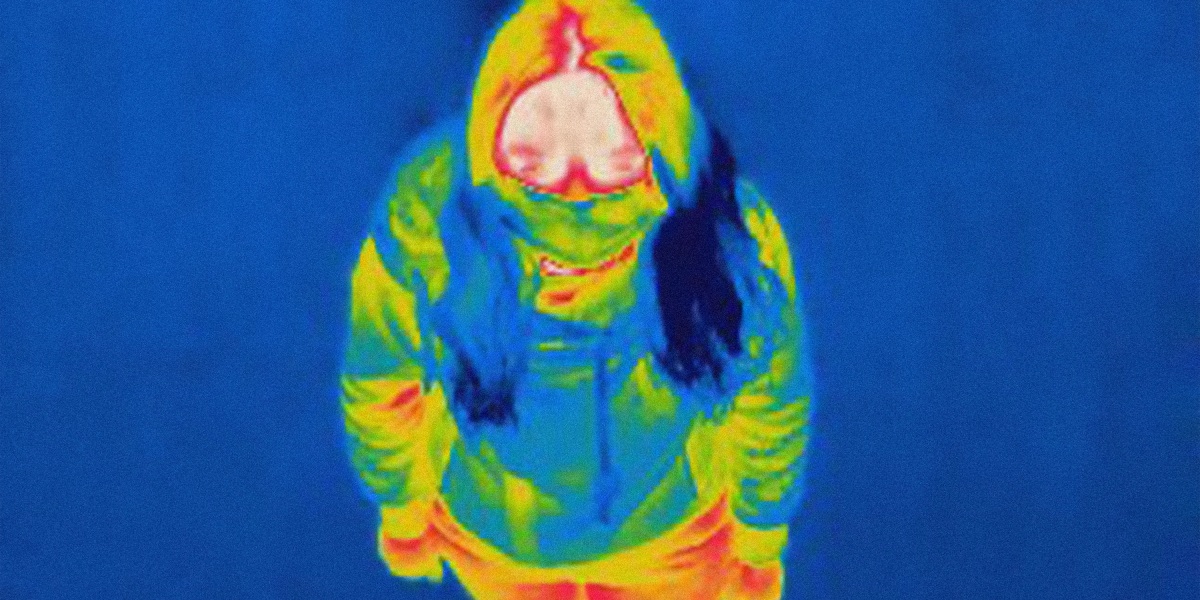

Amazon’s purchase of Dahua heat-mapping cameras recalls an older moment in the spread of global capitalism that was captured by historian Jason Moore’s memorable turn of phrase: “Behind Manchester stands Mississippi.”

What did Moore mean by this? In his rereading of Friedrich Engels’s analysis of the textile industry that made Manchester, England, so profitable, he saw that many aspects of the British Industrial Revolution would not have been possible without the cheap cotton produced by slave labor in the United States. In a similar way, the ability of Seattle, Kansas City, and Seoul to respond as rapidly as they did to the pandemic relies in part on the way systems of oppression in Northwest China have opened up a space to train biometric surveillance algorithms.

The protection of workers during the pandemic depends on forgetting about college students like Vera Zhou. It means ignoring the dehumanization of thousands upon thousands of detainees and unfree workers.

At the same time, Seattle also stands before Xinjiang.

Amazon has its own role in involuntary surveillance that disproportionately harms ethno-racial minorities given its partnership with US Immigration and Customs Enforcement to target undocumented immigrants and its active lobbying efforts in support of weak biometric surveillance regulation. More directly, Microsoft Research Asia, the so-called “cradle of Chinese AI,” has played an instrumental role in the growth and development of both Dahua and Megvii.

Chinese state funding, global terrorism discourse, and US industry training are three of the primary reasons why a fleet of Chinese companies now leads the world in face and voice recognition. This process was accelerated by a war on terror that centered on placing Uyghurs, Kazakhs, and Hui within a complex digital and material enclosure, but it now extends throughout the Chinese technology industry, where data-intensive infrastructure systems produce flexible digital enclosures throughout the nation, though not at the same scale as in Xinjiang.

China’s vast and rapid response to the pandemic has further accelerated this process by rapidly implementing these systems and making clear that they work. Because they extend state power in such sweeping and intimate ways, they can effectively alter human behavior.

Alternative approaches

The Chinese approach to the pandemic is not the only way to stop it, however. Democratic states like New Zealand and Canada, which have provided testing, masks, and economic assistance to those forced to stay home, have also been effective. These nations make clear that involuntary surveillance is not the only way to protect the well-being of the majority, even at the level of the nation.

In fact, numerous studies have shown that surveillance systems support systemic racism and dehumanization by making targeted populations detainable. The past and current US administrations’ use of the Entity List to halt sales to companies like Dahua and Megvii, while important, is also producing a double standard, punishing Chinese firms for automating racialization while funding American companies to do similar things.

Increasing numbers of US-based companies are attempting to develop their own algorithms to detect racial phenotypes, though through a consumerist approach that is premised on consent. By making automated racialization a form of convenience in marketing things like lipstick, companies like Revlon are hardening the technical scripts that are available to individuals.

As a result, in many ways race continues to be an unthought part of how people interact with the world. Police in the United States and in China think about automated assessment technologies as tools for detecting potential criminals or terrorists. The algorithms make it appear normal that Black men or Uyghurs are disproportionately detected by these systems. They stop the police, and those they protect, from recognizing that surveillance is always about controlling and disciplining people who do not fit into the vision of those in power. The world, not China alone, has a problem with surveillance.

To counteract the increasing banality, the everydayness, of automated racialization, the harms of biometric surveillance around the world must first be made apparent. The lives of the detainable must be made visible at the edge of power over life. Then the role of world-class engineers, investors, and public relations firms in the unthinking of human experience, in designing for human reeducation, must be made clear. The webs of interconnection, the way Xinjiang stands behind and before Seattle, must be made thinkable.

—This story is an edited excerpt from In the Camps: China’s High-Tech Penal Colony, by Darren Byler (Columbia Global Reports, 2021). Darren Byler is an assistant professor of international studies at Simon Fraser University, focused on the technology and politics of urban life in China.