When a demo of the software was released in late August, users quickly found that certain words—both explicit mentions of political leaders’ names and words that are potentially controversial only in political contexts—were labeled as “sensitive” and blocked from generating any result. China’s sophisticated system of online censorship, it seems, has extended to the latest trend in AI.

It’s not unusual for similar AIs to stop users from generating certain types of content. DALL-E 2, for example, prohibits sexual content, faces of public figures, and medical treatment images. But the case of ERNIE-ViLG underlines the question of where exactly the line between moderation and political censorship lies.

The ERNIE-ViLG model is part of Wenxin, a large-scale project in natural-language processing from China’s leading AI company, Baidu. It was trained on a data set of 145 million image-text pairs and contains 10 billion parameters—the values that a neural network adjusts as it learns, which the AI uses to discern the subtle differences between concepts and art styles.

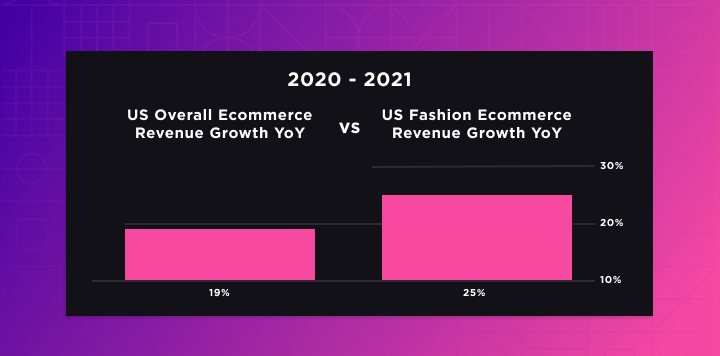

That means ERNIE-ViLG has a smaller training data set than DALL-E 2 (650 million pairs) and Stable Diffusion (2.3 billion pairs) but more parameters than either one (DALL-E 2 has 3.5 billion parameters and Stable Diffusion has 890 million). Baidu released a demo version on its own platform in late August and later on Hugging Face, the popular international AI community.

The main difference between ERNIE-ViLG and Western models is that Baidu’s model understands prompts written in Chinese and is less likely to make mistakes with culturally specific words.

For example, a Chinese video creator compared the results from different models for prompts that included Chinese historical figures, pop culture celebrities, and food. He found that ERNIE-ViLG produced more accurate images than DALL-E 2 or Stable Diffusion. Since its release, ERNIE-ViLG has also been embraced by members of the Japanese anime community, who found that it generates more satisfying anime art than other models, likely because its training data included more anime.

But ERNIE-ViLG will be defined, as the other models are, by what it allows. Unlike DALL-E 2 or Stable Diffusion, ERNIE-ViLG does not have a published explanation of its content moderation policy, and Baidu declined to comment for this story.

When the ERNIE-ViLG demo was first released on Hugging Face, users inputting certain words would receive the message “Sensitive words found. Please enter again (存在敏感词,请重新输入),” which was a surprisingly honest admission about the filtering mechanism. However, since at least September 12, the message has read “The content entered doesn’t meet relevant rules. Please try again after adjusting it. (输入内容不符合相关规则,请调整后再试!)”