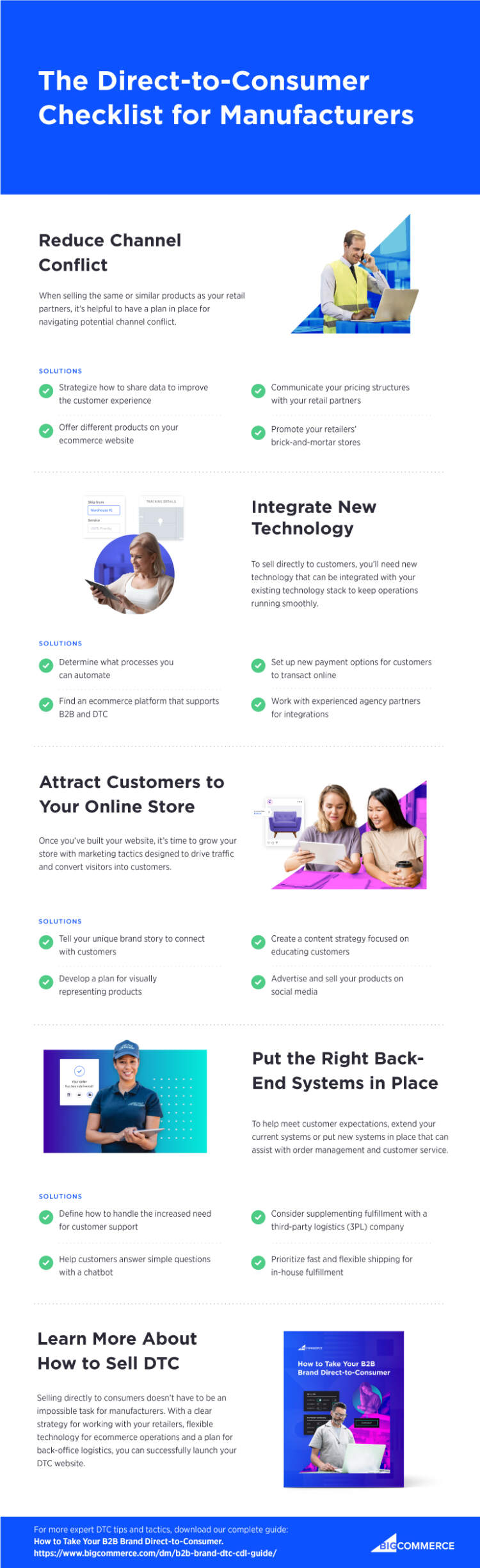

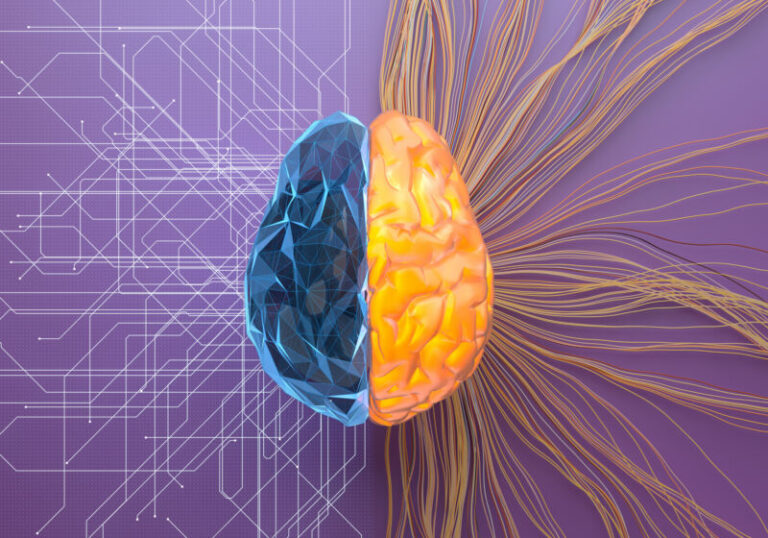

The last 20 months turned every dog into an amateur epidemiologist and statistician. Meanwhile, a group of bona fide epidemiologists and statisticians came to believe that pandemic problems might be more effectively solved by adopting the mindset of an engineer: that is, focusing on pragmatic problem-solving with an iterative, adaptive strategy to make things work.

In a recent essay, “Accounting for uncertainty during a pandemic,” the researchers reflect on their roles during a public health emergency and on how they could be better prepared for the next crisis. The answer, they write, may lie in reimagining epidemiology with more of an engineering perspective and less of a “pure science” perspective.

Epidemiological research informs public health policy, which carries an inherently applied mandate for prevention and protection. But the right balance between pure research results and pragmatic solutions proved alarmingly elusive during the pandemic.

“I always imagined that in this kind of emergency, epidemiologists would be useful people,” Jon Zelner, a coauthor of the essay, says. “But our role has been more complex and more poorly defined than I had expected at the outset of the pandemic.” An infectious disease modeler and social epidemiologist at the University of Michigan, Zelner witnessed an “insane proliferation” of research papers, “many with very little thought about what any of it really meant in terms of having a positive impact.”

“There were a number of missed opportunities,” Zelner says—caused by missing links between the ideas and tools epidemiologists proposed and the world they were meant to help.

Giving up on certainty

Coauthor Andrew Gelman, a statistician and political scientist at Columbia University, set out “the bigger picture” in the essay’s introduction. He likened the pandemic’s outbreak of amateur epidemiologists to the way war makes every citizen into an amateur geographer and tactician: “Instead of maps with colored pins, we have charts of exposure and death counts; people on the street argue about infection fatality rates and herd immunity the way they might have debated wartime strategies and alliances in the past.”

And along with all the data and public discourse—Are masks still necessary? How long will vaccine protection last?—came the barrage of uncertainty.

In trying to understand what just happened and what went wrong, the researchers (who also included Ruth Etzioni at the University of Washington and Julien Riou at the University of Bern) conducted something of a reenactment. They examined the tools used to tackle challenges such as estimating the rate of transmission from person to person and the number of cases circulating in a population at any given time. They assessed everything from data collection (the quality of data and its interpretation were arguably the biggest challenges of the pandemic) to model design to statistical analysis, as well as communication, decision-making, and trust. “Uncertainty is present at each step,” they wrote.

And yet, Gelman says, the analysis still “doesn’t quite express enough of the confusion I went through during those early months.”

One tactic against all the uncertainty is statistics. Gelman thinks of statistics as “mathematical engineering”—methods and tools that are as much about measurement as discovery. The statistical sciences attempt to illuminate what’s going on in the world, with a spotlight on variation and uncertainty. When new evidence arrives, it should generate an iterative process that gradually refines previous knowledge and hones certainty.
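
One textbook way to picture that iterative refinement is Bayesian updating. The Python sketch below is an illustration only, not the method the essay's authors used: it estimates a hypothetical test-positivity rate with a conjugate beta-binomial update as made-up batches of results arrive. The helper names `update_beta` and `beta_mean_sd` and the `batches` data are all assumptions invented for this example. Each new batch refines the previous estimate, and the posterior standard deviation (a rough measure of remaining uncertainty) shrinks.

```python
import math


def update_beta(alpha, beta, positives, negatives):
    """Conjugate update: a Beta(alpha, beta) prior combined with binomial
    data gives a Beta(alpha + positives, beta + negatives) posterior."""
    return alpha + positives, beta + negatives


def beta_mean_sd(alpha, beta):
    """Mean and standard deviation of a Beta(alpha, beta) distribution."""
    total = alpha + beta
    mean = alpha / total
    var = alpha * beta / (total ** 2 * (total + 1))
    return mean, math.sqrt(var)


# Hypothetical batches of (positive, negative) test results; illustrative only.
batches = [(3, 17), (12, 88), (45, 355)]

alpha, beta = 1.0, 1.0  # flat Beta(1, 1) prior: no strong initial belief
history = []
for positives, negatives in batches:
    # Yesterday's posterior becomes today's prior.
    alpha, beta = update_beta(alpha, beta, positives, negatives)
    mean, sd = beta_mean_sd(alpha, beta)
    history.append((mean, sd))
    print(f"after {positives + negatives} more tests: mean={mean:.3f}, sd={sd:.3f}")
```

The estimate never becomes certain; the spread only narrows as evidence accumulates, which is the humbler picture of statistics the essay argues for.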

Good science is humble and capable of refining itself in the face of uncertainty.

Marc Lipsitch

Susan Holmes, a statistician at Stanford who was not involved in this research, also sees parallels with the engineering mindset. “An engineer is always updating their picture,” she says—revising as new data and tools become available. In tackling a problem, an engineer offers a first-order approximation (blurry), then a second-order approximation (more focused), and so on.

Gelman, however, has previously warned that statistical science can be deployed as a machine for “laundering uncertainty”—deliberately or not, crappy (uncertain) data are rolled together and made to seem convincing (certain). Statistics wielded against uncertainties “are all too often sold as a sort of alchemy that will transform these uncertainties into certainty.”

We witnessed this during the pandemic. Drowning in upheaval and unknowns, epidemiologists and statisticians—amateur and expert alike—grasped for something solid as they tried to stay afloat. But as Gelman points out, wanting certainty during a pandemic is inappropriate and unrealistic. “Premature certainty has been part of the challenge of decisions in the pandemic,” he says. “This jumping around between uncertainty and certainty has caused a lot of problems.”

Letting go of the desire for certainty can be liberating, he says. And this, in part, is where the engineering perspective comes in.

A tinkering mindset

For Seth Guikema, co-director of the Center for Risk Analysis and Informed Decision Engineering at the University of Michigan (and a collaborator of Zelner’s on other projects), a key aspect of the engineering approach is diving into the uncertainty, analyzing the mess, and then taking a step back, with the perspective “We have to make practical decisions, so how much does the uncertainty really matter?” Because if there’s a lot of uncertainty—and if the uncertainty changes what the optimal decisions are, or even what the good decisions are—then that’s important to know, says Guikema. “But if it doesn’t really affect what my best decisions are, then it’s less critical.”

For instance, there is some uncertainty about exactly how many cases or deaths increased SARS-CoV-2 vaccination coverage will prevent. But because vaccination is highly likely to decrease both, with few adverse effects, that is motivation enough to decide that a large-scale vaccination program is a good idea.
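
Guikema's question, whether the uncertainty actually changes the best decision, can be sketched as a simple robustness check. The toy Python example below is an assumption-laden illustration, not Guikema's method: it draws a hypothetical vaccine effectiveness anywhere between 50% and 95%, computes hypothetical cases per 100,000 people under each option, and tallies which option comes out best on each draw. The function names, the `BASELINE_CASES` figure, and the effectiveness range are all invented for this sketch, and it ignores adverse effects entirely.

```python
import random


def best_option(costs):
    """Return the option name with the lowest cost."""
    return min(costs, key=costs.get)


def decision_tally(cost_fn, options, sample_param, n_draws=1000, seed=0):
    """Draw the uncertain parameter many times and count which option is
    best on each draw. If one option wins every draw, the uncertainty does
    not change the decision."""
    rng = random.Random(seed)
    winners = {}
    for _ in range(n_draws):
        theta = sample_param(rng)
        costs = {opt: cost_fn(opt, theta) for opt in options}
        winner = best_option(costs)
        winners[winner] = winners.get(winner, 0) + 1
    return winners


# Hypothetical toy model: cases per 100,000 people under each option.
BASELINE_CASES = 1000.0


def cases(option, effectiveness):
    if option == "vaccinate":
        return BASELINE_CASES * (1 - effectiveness)
    return BASELINE_CASES  # "do nothing"


winners = decision_tally(
    cases,
    options=["vaccinate", "do nothing"],
    sample_param=lambda rng: rng.uniform(0.5, 0.95),  # uncertain effectiveness
)
print(winners)
```

Here the exact number of cases prevented varies widely across draws, yet the same option wins every time, so the uncertainty is "less critical" in Guikema's sense. If the tally were split between options, the uncertainty would be changing the decision and would deserve a closer look.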

Engineers, Holmes points out, are also very good at breaking problems down into critical pieces, applying carefully selected tools, and optimizing for solutions under constraints. With a team of engineers building a bridge, there is a specialist in cement and a specialist in steel, a wind engineer and a structural engineer. “All the different specialties work together,” she says.

For Zelner, the notion of epidemiology as an engineering discipline is something he picked up from his father, a mechanical engineer who started his own company designing health-care facilities. Drawing on a childhood full of building and fixing things, Zelner brings an engineering mindset that involves tinkering: refining a transmission model, for instance, in response to a moving target.

“Often these problems require iterative solutions, where you’re making changes in response to what does or doesn’t work,” he says. “You continue to update what you’re doing as more data comes in and you see the successes and failures of your approach. To me, that’s very different—and better suited to the complex, non-stationary problems that define public health—than the kind of static one-and-done image a lot of people have of academic science, where you have a big idea, test it, and your result is preserved in amber for all time.”